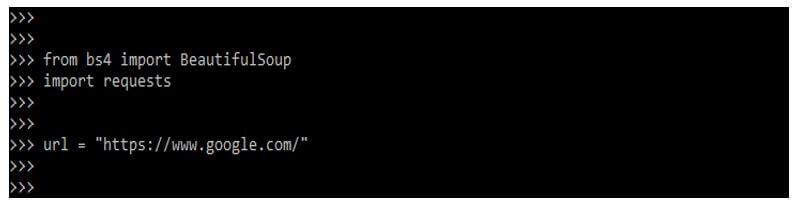

# this gives us a whole HTML node but we can also just select the text: # for example title is under `head` node: # then we can navigate the html tree via python API: Let's start with a very simple piece of HTML data that contains some basic elements of an article: title, subtitle, and some text paragraphs: from bs4 import BeautifulSoup Now, that we got our soup hot and ready let's see what it can do! Basic Navigation

# html5lib - parses pages same way modern browser does Soup = BeautifulSoup(html, "html.parser") # automatically select the backend (not recommended as it makes code hard to share)

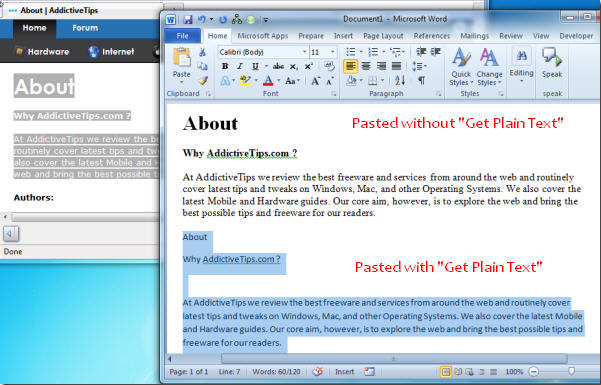

The backend can be chosen every time we create a beautiful soup object: from bs4 import BeautifulSoup As for html5lib it's mostly best for edge cases where html5 specification compliance is necessary. To summarize, it's best to stick with lxml backend because it's much faster, however html.parser is still a good option for smaller projects. html5lib - another parser written in python that is intended to be fully html5 compliant.lxml - C-based library for HTML parsing: very fast, but can be a bit more difficult to install.html.parser - python's built-in parser, which is written in python meaning it's always available though it's a bit slower.Tip: Choosing a Backendīs4 is pretty big and comes with several backends that provide HTML parsing algorithms that differ very slightly: It also comes with utility functions like visual formatting and parse tree cleanup. Using it we can navigate HTML data to extract/delete/replace particular HTML elements. Parsing HTML with BeautifulSoupīeautifulsoup is a python library that is used for parsing HTML documents. With this basic understanding, we can see how python and beautifulsoup can help us traverse this tree to extract the data we need. Keyword Properties - keyword values like class, href etc.Natural Properties - the text value and position.Here, we can wrap our heads around it a bit more easily - it's a tree of nodes and each node consists of: Let's go a bit further and illustrate this: In this basic example of a simple web page source code, we can see that the document already resembles a data tree just by looking at the indentation. Let's start, with a small example page and illustrate its structure: In other words, HTML follows a tree-like structure of nodes (HTML tags) and their attributes, which we can easily navigate programmatically. HTML (HyperText Markup Language) is designed to be easily machine-readable and parsable. 
To fully understand HTML parsing let's take a look at what makes HTML such a powerful data structure. This example illustrates how easily we can parse web pages for product data and a few key features of beautifulsoup4. "full_price": soup.find(class_="product").find(class_="full").text, $ poetry init -n -dependency bs4 requestsīefore we start, let's see a quick beautifulsoup example of what this python package is capable of: html = """ Or alternatively, in a new virtual environment using poetry package manager: $ mkdir bs4-project & cd bs4-project All of these can be installed through the pip install console command: $ pip install bs4 requests We'll also be using requests package in our example to download the web content. In this article, we'll be using Python 3.7+ and beautifulsoup4. The tool we're covering today - beautifulsoup4 - is used for parsing collected HTML data and it's really good at it. Web scraping is used to collect datasets for market research, real estate analysis, business intelligence and so on - see our Web Scraping Use Cases article for more. In other words, it's a program that retrieves data from websites (usually HTML pages) and parses it for specific data. Web scraping is the process of collecting data from the web.
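The navigation steps described above can be sketched end to end. This is a minimal sketch that assumes a hypothetical article page with a title, a subtitle and two paragraphs. It uses only standard bs4 features: attribute-style access such as `soup.head.title`, the `.text` property, and `find_all`.

```python
from bs4 import BeautifulSoup

# Hypothetical article with a title, subtitle and a few paragraphs
html = """
<html>
  <head>
    <title>My Article</title>
  </head>
  <body>
    <h1>My Article</h1>
    <h2>A short subtitle</h2>
    <p>First paragraph.</p>
    <p>Second paragraph.</p>
  </body>
</html>
"""

soup = BeautifulSoup(html, "html.parser")

# attribute-style access walks down the tree: the title is under the head node
print(soup.head.title)       # <title>My Article</title>
# .text selects only the text of that node
print(soup.head.title.text)  # My Article
# find_all collects every matching node, e.g. all paragraphs in the body
paragraphs = [p.text for p in soup.body.find_all("p")]
print(paragraphs)            # ['First paragraph.', 'Second paragraph.']
```

Attribute access like `soup.head.title` returns only the first matching tag. Use `find_all` whenever a page may contain several matching elements.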
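Earlier, the article describes each tree node as having "natural" properties (text and position) and "keyword" properties (attributes). The snippet below maps both onto bs4 objects. The page and its `intro`/`link` classes are hypothetical. Note that bs4 returns `class` as a list because HTML allows several classes per element.

```python
from bs4 import BeautifulSoup

# Hypothetical page used only to show node properties
html = """
<html>
  <body>
    <p class="intro">Read the <a href="https://example.com/docs" class="link">docs</a></p>
  </body>
</html>
"""

soup = BeautifulSoup(html, "html.parser")
link = soup.find("a")

# "Natural" properties: the node's text and its position in the tree
print(link.text)          # docs
print(link.parent.name)   # p
# "Keyword" properties: attributes such as href and class
print(link["href"])       # https://example.com/docs
print(link.attrs)         # {'href': 'https://example.com/docs', 'class': ['link']}
```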
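The backend tip recommends lxml but also notes it can be harder to install. One way to honor both points is to prefer lxml and fall back to the built-in parser. The sketch below relies on bs4 raising `FeatureNotFound` when a requested parser is missing. The helper name `make_soup` is hypothetical and not part of bs4.

```python
from bs4 import BeautifulSoup, FeatureNotFound


def make_soup(html: str) -> BeautifulSoup:
    """Parse with lxml when it is installed, otherwise fall back to html.parser."""
    try:
        return BeautifulSoup(html, "lxml")
    except FeatureNotFound:
        # lxml is not installed; the built-in parser is always available
        return BeautifulSoup(html, "html.parser")


soup = make_soup("<p>Hello <b>world</b></p>")
print(soup.b.text)  # world
```

Parsers can build slightly different trees for the same markup. For example, lxml wraps fragments in `<html><body>`. A silent fallback can therefore change results between machines, so pinning one backend is usually the more reproducible choice.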
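The article states that beautifulsoup can extract, delete and replace elements, and can visually format the tree. A short illustration using bs4's `decompose()`, `replace_with()` and `prettify()` methods follows. The markup (including the `ad` class) is hypothetical.

```python
from bs4 import BeautifulSoup

html = '<div><p class="ad">Buy now!</p><p>Real content</p><b>old</b></div>'
soup = BeautifulSoup(html, "html.parser")

# delete: decompose() removes the node and its children from the tree
soup.find(class_="ad").decompose()
# replace: replace_with() swaps a node for another node or a plain string
soup.b.replace_with("new")
print(soup)  # <div><p>Real content</p>new</div>
# visual formatting: prettify() returns the tree as an indented string
print(soup.prettify())
```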
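The quick product example near the start of the article survives only in fragments. Below is a minimal, self-contained sketch in the same spirit. Only the `product` and `full` class names come from the original fragment. The rest of the markup, the `discount` class and the dictionary keys other than `full_price` are hypothetical.

```python
from bs4 import BeautifulSoup

# Hypothetical product markup; only "product" and "full" come from the original fragment
html = """
<div class="product">
  <h2>Blue Mug</h2>
  <span class="discount">12.99</span>
  <span class="full">19.99</span>
</div>
"""

soup = BeautifulSoup(html, "html.parser")
product = soup.find(class_="product")

data = {
    # .text returns the concatenated text of the node and its descendants
    "name": product.find("h2").text,
    "price": product.find(class_="discount").text,
    "full_price": product.find(class_="full").text,
}
print(data)  # {'name': 'Blue Mug', 'price': '12.99', 'full_price': '19.99'}
```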
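The introduction mentions using requests to download pages before parsing them. A minimal sketch of that split follows, assuming network access for the download step. The function names `fetch_html` and `page_title` are hypothetical, and the 10-second timeout is an arbitrary choice.

```python
from bs4 import BeautifulSoup


def fetch_html(url: str) -> str:
    """Download a page with requests (assumes network access)."""
    import requests  # imported here so page_title() works even without requests installed

    response = requests.get(url, timeout=10)
    response.raise_for_status()  # raise on 4xx/5xx instead of parsing an error page
    return response.text


def page_title(html: str) -> str:
    """Return the stripped <title> text of an HTML document, or "" if there is none."""
    soup = BeautifulSoup(html, "html.parser")
    return soup.title.text.strip() if soup.title else ""
```

Usage would look like `page_title(fetch_html("https://example.com"))`. Keeping download and parsing in separate functions lets the parsing logic be tested against saved HTML without touching the network.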
